They have native support, and the tables or buckets are specified in this screen. S3 and DynamoDB are unique, in they do not need a connection previously configured like above. As seen below, it supports both internal to AWS services, as well as any JDBC connection, MongoDB and Databricks Delta Lake. The next page is where you configure the actual source that the crawler will crawl. The next page is where the crawler source type is configured, as seen below. Tags description and the security configuration are optional. The only required field is 'Crawler name'.

The page to start configuration is below: Press the 'Add Crawler' button to start configuring one. Click on the 'Crawlers' tab and it will list any that have been previously configured. The crawler is a managed metadata discovery service within Glue. To make tables using a crawler, the crawler must be configured prior. The last is replicating from an existing schema, which is not possible from the first instance of setting up tables. The second is by manual input, which entails physically typing out all the field names, their types, etc. The first and preferred method is using a crawler. The connection can then be tested before saving the configuration (it's best to not skip this step!).Īdding a table can happen in three ways. If not, then it asks for the VPC that the target service is paired with, as well as any pertinent connection info for it like URL, username, password. Once the connection type is selected, depending on what it is, it may ask you to select the instance of the AWS managed service. Glue also supports Kafka streams (both MSK, the AWS managed Kafka) and any generic Kafka cluster you might have self-hosted, and finally a generic network connection. Glue supports generic JDBC, managed connections to RDS clusters, managed connections to Redshift, and managed connections to DocumentDB (the AWS version of MongoDB). The following configuration page comes up: To instantiate this, click on the 'Connections' tab to start this process, and then click 'Add Connection'. Next, to populate the tables section, schema registries or to use the crawler (the other two main functions in Data Catalog) you need to first successfully connect to a data source. This is nothing more than typing a name and saving it. First, navigate to the 'Databases' tab, because a new database will need to be defined. The Data Catalog tab is not the most intuitive when getting started for the first time. 
The left-hand navigation options show two primary areas of focus: Data Catalog and ETL. To get started with Glue and its data catalog, first go to the AWS console and search for 'AWS Glue'. Also, this type of capability can be used by data architects and scientists to manage slowly changing dimensions of data, and for data discovery at any time. Mainly because there was no traditional management studio or client for HDFS datastores. For larger enterprises who jumped onto the 2010s big-data bandwagon, a data catalog became a crucial tool for data governance and data management. The data catalog can trace its existence back to Apache Hive Metastore in the Hadoop Ecosystem. The detailed decision points will be highlighted later. Despite the hype from AWS and their certified consultants, it is not a panacea for smaller data teams, and can be a burden to maintain. Many parts of Glue can be used by other applications, an example is many AWS services have an option to catalog metadata within Glue this is true for Amazon Athena, EMR, and Redshift.īut there are many limitations of AWS Glue compared to EMR (Elastic Map Reduce) or 3rd party solutions such as Runner. The biggest asset outside of its serverless architecture (no need to manage an EMR cluster) is that many of the data processes can be stood up inside the graphical user interface of the console, without writing code.

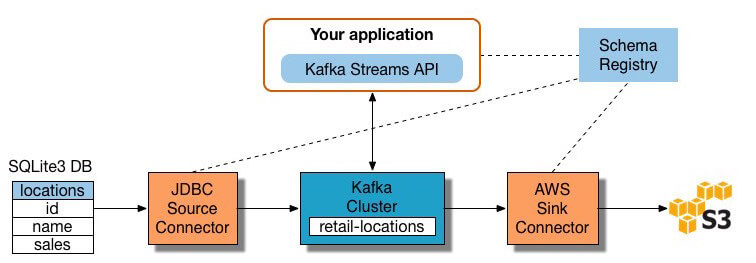

These are services for data that is moved, transformations and managed both within and outside the AWS account. Amazon Web Service’s Glue is a serverless, fully managed, big data service that provides a cataloging tool, ETL processes, and code-free data integration.
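The console steps described in this post (define a database, add a connection, configure a crawler) can also be scripted. The sketch below is a minimal illustration rather than the method used in this post: it assumes the boto3 Glue client, and every name, path, and role ARN in it is a hypothetical placeholder. The helper functions only build the request arguments, so they can be inspected without touching AWS. Note that, unlike the first console page where only the crawler name is required, the `create_crawler` API call also needs an IAM role and at least one target.

```python
from typing import Any, Dict, Iterable, Optional, Tuple


def database_request(name: str) -> Dict[str, Any]:
    """Arguments for glue.create_database: a database is little more than a name."""
    return {"DatabaseInput": {"Name": name}}


def jdbc_connection_request(
    name: str,
    jdbc_url: str,
    username: str,
    password: str,
    subnet_id: Optional[str] = None,
    security_group_ids: Iterable[str] = (),
) -> Dict[str, Any]:
    """Arguments for glue.create_connection for a generic JDBC source."""
    connection: Dict[str, Any] = {
        "Name": name,
        "ConnectionType": "JDBC",
        "ConnectionProperties": {
            "JDBC_CONNECTION_URL": jdbc_url,
            "USERNAME": username,
            "PASSWORD": password,
        },
    }
    if subnet_id:
        # VPC details, needed when the target lives inside a VPC.
        connection["PhysicalConnectionRequirements"] = {
            "SubnetId": subnet_id,
            "SecurityGroupIdList": list(security_group_ids),
        }
    return {"ConnectionInput": connection}


def crawler_request(
    name: str,
    role_arn: str,
    database: str,
    s3_paths: Iterable[str] = (),
    dynamodb_tables: Iterable[str] = (),
    jdbc_targets: Iterable[Tuple[str, str]] = (),
    description: Optional[str] = None,
    tags: Optional[Dict[str, str]] = None,
) -> Dict[str, Any]:
    """Arguments for glue.create_crawler.

    S3 and DynamoDB targets need no connection; each JDBC target is a
    (connection_name, path) pair that refers to an existing connection.
    """
    targets: Dict[str, Any] = {}
    if s3_paths:
        targets["S3Targets"] = [{"Path": p} for p in s3_paths]
    if dynamodb_tables:
        targets["DynamoDBTargets"] = [{"Path": t} for t in dynamodb_tables]
    if jdbc_targets:
        targets["JdbcTargets"] = [
            {"ConnectionName": conn, "Path": path} for conn, path in jdbc_targets
        ]
    if not targets:
        raise ValueError("a crawler needs at least one target")

    request: Dict[str, Any] = {
        "Name": name,
        "Role": role_arn,
        "DatabaseName": database,
        "Targets": targets,
    }
    # Description and tags are optional, as in the console.
    if description:
        request["Description"] = description
    if tags:
        request["Tags"] = dict(tags)
    return request


def apply_catalog_setup(glue, database, crawler, connection=None) -> None:
    """Send the prepared requests to a Glue client, then start the crawler."""
    glue.create_database(**database)
    if connection is not None:
        glue.create_connection(**connection)
    glue.create_crawler(**crawler)
    glue.start_crawler(Name=crawler["Name"])


# Usage (requires boto3 and AWS credentials; all values are placeholders):
#   import boto3
#   glue = boto3.client("glue")
#   apply_catalog_setup(
#       glue,
#       database_request("demo_db"),
#       crawler_request("demo-crawler", "arn:aws:iam::123456789012:role/DemoGlueRole",
#                       "demo_db", s3_paths=["s3://demo-bucket/raw/"]),
#   )
```

Keeping the request builders separate from the client calls is a design choice for this sketch: the dictionaries can be reviewed or version-controlled before anything is created in the account.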
